Network operators have relied on Deep Packet Inspection (DPI) equipment for almost two decades. Because DPI can inspect the payloads of network packets, it has become a common and useful method for detecting malicious traffic, preventing data leakage and applying QoS to different traffic categories. It also provides packet-level visibility by reporting on the packets traversing the network.

As a result, deep packet inspection has been deployed within telecom and cable infrastructure for security, traffic engineering and diagnostic purposes. As adoption has expanded over time, it's fair to say that most large and many mid-size telecom and cable operators have deployed DPI, to some extent, within their IP networks.

Deep packet inspection was conceived well before the security threat landscape changed. For example, a signature-based approach to security is no longer enough on its own; next-generation approaches that apply AI/ML are also needed to detect suspicious behavior. Similarly, DPI became prominent before modern big data analytics technology came to bear. Today, big data architectures can be applied to make sense of the enormous volume of activity across subscribers, web domains, games and video/OTT content on the network.

Deep packet inspection also remains expensive. The hardware required to inspect packets at network speed grows more costly as network bandwidth continues to rise, which also raises questions about its future scalability. In addition, the rapidly increasing share of encrypted digital media and web traffic on the Internet limits how deeply DPI can actually inspect. It's clear that DPI has been stretched to its limit in an attempt to use it beyond what it was originally intended to do.

The shortcomings of deep packet inspection have put a lot of pressure on network operators and architects. Differentiating on network quality is increasingly difficult, particularly in the major markets where most subscribers reside. Analytics has become the new battleground for competitive advantage: analytics that enable differentiated services, superior customer support and actionable insight to attract and delight subscribers, with the objective of maximizing subscriber revenue at the lowest operating cost.

Marketing wants to know how to acquire new customers and personalize product bundles. Support wants to enforce new tier-based billing plans and offer customers a level of self-service. The business wants to predict subscriber satisfaction so it can anticipate churn and take proactive action with subscribers who are about to leave. Security wants to investigate suspicious activity that might be causing revenue loss, such as pirated content distribution. And the network team needs insight into how to use network capacity most efficiently and where new capital will deliver the most benefit. These are all questions that matter for running a profitable business, but unfortunately DPI reports answer few of them.

The longstanding DPI investments that operators have made can't extract deep intelligence from the data that traverses the network. As a result, operators are reluctant to make new investments in, and upgrades to, DPI hardware as they expand their networks. Instead, there is a strong desire to evaluate alternative analytics solutions that can help solve the most pressing problems facing the business.

It turns out that solving the big data analytics challenge for network operators is demanding enough that even many of the prominent SQL and NoSQL solutions of the last few years haven't been able to rise to it. The reason is the massive scale and performance needed for useful analytics within telco and cable infrastructure.

Big data for network operators requires the ability to ingest millions of NetFlow or IPFIX events per second, generated by millions of subscribers. Each event needs to be correlated in real time with subscriber identity, DNS and DHCP records in order to provide an enriched understanding of every event. Data needs to be retained for at least a year, which translates to more than a trillion events in the system. And all sorts of queries, not only reporting and analytic queries but also ad hoc and forensic ones, must be answered in seconds.
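To make the enrichment step and the retention arithmetic more concrete, here is a minimal Python sketch. It is not Edge Intelligence's implementation; the record types (`FlowRecord`, `DhcpLease`), the `LeaseIndex` class and the `enrich` and `events_retained` functions are all hypothetical, and the lookups are in-memory. A production pipeline would parse real NetFlow/IPFIX records and would need a distributed store at this scale.

```python
from bisect import bisect_right
from dataclasses import dataclass
from typing import Dict, List, Optional


def events_retained(events_per_second: int, days: int = 365) -> int:
    """Events stored after ingesting at a constant rate for `days` days."""
    return events_per_second * days * 24 * 60 * 60


@dataclass(frozen=True)
class FlowRecord:
    # Hypothetical, simplified flow event; real NetFlow/IPFIX records carry many more fields.
    timestamp: int   # seconds since epoch
    src_ip: str      # subscriber-side IP address
    dst_ip: str      # remote server IP address
    byte_count: int


@dataclass(frozen=True)
class DhcpLease:
    # Hypothetical lease record: `ip` was assigned to `subscriber_id` starting at `start`.
    ip: str
    start: int
    subscriber_id: str


class LeaseIndex:
    """Resolves (ip, time) to the subscriber holding that IP at that time.

    Simplifying assumption: a lease stays valid until the next lease for the
    same IP begins (real DHCP logs also record expiry and release).
    """

    def __init__(self, leases: List[DhcpLease]) -> None:
        grouped: Dict[str, List[DhcpLease]] = {}
        for lease in leases:
            grouped.setdefault(lease.ip, []).append(lease)
        # Per IP, keep lease start times sorted so lookups can binary-search them.
        self._starts: Dict[str, List[int]] = {}
        self._subscribers: Dict[str, List[str]] = {}
        for ip, ip_leases in grouped.items():
            ip_leases.sort(key=lambda lease: lease.start)
            self._starts[ip] = [lease.start for lease in ip_leases]
            self._subscribers[ip] = [lease.subscriber_id for lease in ip_leases]

    def lookup(self, ip: str, ts: int) -> Optional[str]:
        starts = self._starts.get(ip)
        if starts is None:
            return None
        # Index of the latest lease that started at or before ts (-1 if none).
        i = bisect_right(starts, ts) - 1
        return self._subscribers[ip][i] if i >= 0 else None


def enrich(flow: FlowRecord, leases: LeaseIndex, dns: Dict[str, str]) -> dict:
    """Attach subscriber identity (from DHCP) and domain name (from DNS) to a flow."""
    return {
        "timestamp": flow.timestamp,
        "subscriber_id": leases.lookup(flow.src_ip, flow.timestamp),
        # Simplification: one domain per server IP; in practice many domains share an IP.
        "domain": dns.get(flow.dst_ip),
        "byte_count": flow.byte_count,
    }
```

Because IP addresses are reassigned over time, the subscriber lookup has to be time-aware; sorting lease start times per IP makes each lookup a binary search rather than a scan. For the retention figure, a hypothetical steady rate of one million events per second gives `events_retained(1_000_000)` = 31,536,000,000,000, roughly 3.2 × 10^13 events per year, well above a trillion.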

We saw the need to help communication service providers gain a competitive advantage by developing a turnkey application that meets their specific requirements for subscriber analytics. We've applied our unique, patented IP, which reimagines the way data is accessed and stored, to achieve the performance, scale and retention required to be useful. The result is our Subscriber Analytics product, designed specifically for CSPs and built on top of our core distributed analytics software. Because it provides a fully relational SQL database with industry-standard interfaces, network operators and business stakeholders can use the developer tools and third-party BI tools of their choice to access any underlying data and get the answers they need to run the business.

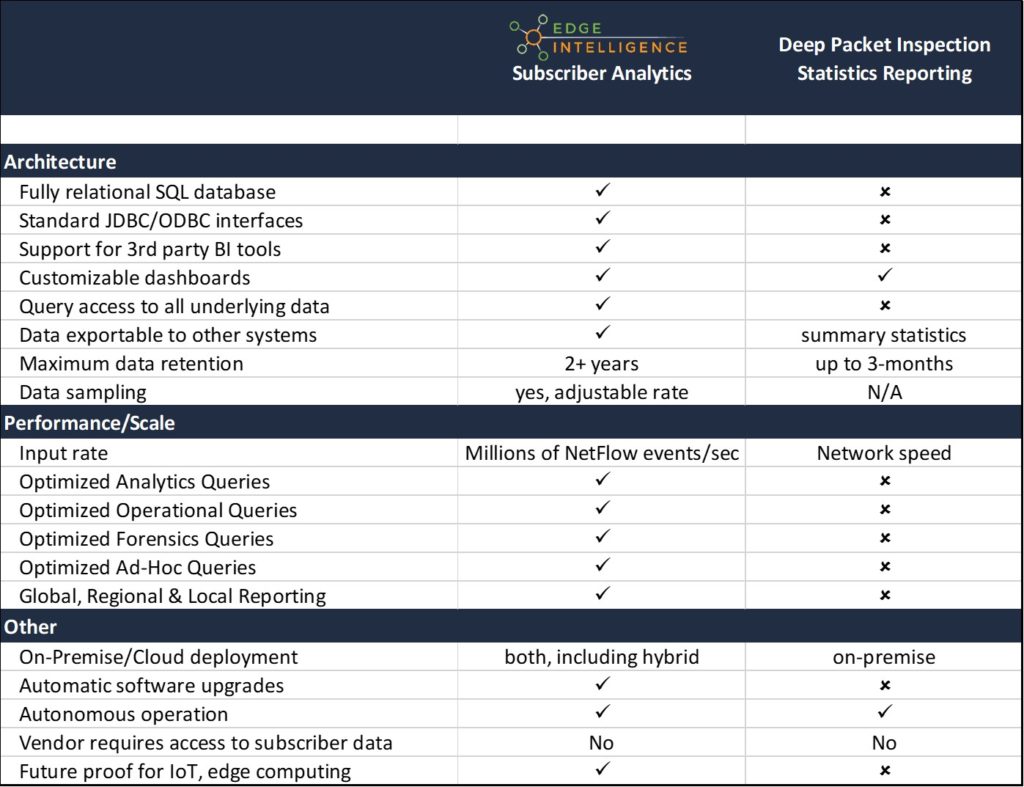

The following table compares Edge Intelligence Subscriber Analytics with a DPI Network Analytics approach:

The need for per-subscriber visibility within communication service provider networks has never been greater. Some modern alternatives to DPI reporting, based on big data approaches, can offer far greater insight into subscriber activity at a fraction of the cost. Contact us if you would like to see a demo of Subscriber Analytics. We have built a demo environment that shows the product's functionality and how easily insight can be obtained using industry-standard tools and dashboards. You can also review our Top 10 Considerations when evaluating a solution provider for Subscriber Analytics.

Neil Cohen is the VP of Marketing at Edge Intelligence – posted on November 5th 2018